Three things we need to make Metaverse happen - Part 2 of the Metaverse series

I ended Part 1 with a few questions: Will the metaverse be the future? Are we as a human race ready for this transition? Will the investment in technology be there to support the environment? The answers to the first two questions are difficult to predict. One thing is certain: adapting to the metaverse will require a big psychological and mindset shift, especially for first-timers. Gamers already have the privilege of experiencing a similar (if not identical) environment today. But I think we can explore the answer to the third question, about the technology and investment required to make the metaverse possible. Experts and organizations working on it are busy extrapolating the bandwidth, latency, hardware, compute, and other requirements to justify the investment costs to their shareholders. As I pointed out in my last blog, technology revolutions occur in multiple periods (read more about Smihula waves), and the main phases are innovation and adoption. I believe we are still in the innovation phase when it comes to the metaverse. We might have achieved a great deal with breakthroughs like Second Life and blockchain, but enabling these technologies depends a lot on Web 3.0 integration (I will write more about Web 3.0 in the future). Without Web 3.0, mass adoption of the metaverse might not even be possible.

In this blog of the metaverse series, I want to explore the technological challenges we need to overcome, and then the technical requirements that must be met for the metaverse to become a mass-market tool. Before I get started, let us be clear that the metaverse is not an application that runs on top of a service; it's not a world, and it's not just a game. It is the next iteration of the internet, one that supports real-time experiences. METAVERSE IS INFRASTRUCTURE.

Infrastructure development requires resources and time. Facebook is currently devoting 20% of its workforce and $18.5B annually to Reality Labs. Mark Zuckerberg recently told Facebook employees that over the next five years he expects the company to transition “from people seeing us as primarily being a social media company to being a metaverse company”. In his heavily produced keynote video for Facebook Reality Labs, Zuckerberg starts by acknowledging that this is a bizarre time for the company to be launching a new product line: Facebook is under more scrutiny than ever for its ill effects on individuals and societies, and for the company’s utter unwillingness to address these issues. He’s promising future technologies that are five to 10 years off.

https://www.facebook.com/RealityLabs/videos/561535698440683

Neal Stephenson’s metaverse has been a lasting creation because it’s fictional. It doesn’t have to solve all the intricate problems of content moderation, extremism, and interpersonal interaction to raise questions about what virtual worlds can give us and what our real world lacks. Leaving Snow Crash aside, even coming close to the vision that companies like Facebook, Roblox, and Microsoft are dreaming of carries a huge technology burden. I have broken the technical requirements into three major compartments.

The first compartment is all about the network: the bandwidth and latency the internet must deliver to let us enter virtual worlds. With the advent of 5G and satellite networks like Starlink, we are making that possible to an extent.

The second compartment is all about hardware. Whether it is consumer hardware or compute hardware, we have a lot of catching up to do. Consumer devices for virtual reality (VR) and augmented reality (AR) still lack seamless environments. Both AR and VR use head-mounted display (HMD) technology, and some people already criticize HMDs as isolating or anti-social. Research has shown that people who wear HMDs in public are perceived as less attractive.

The third compartment is interoperability. It gets less attention from futurists at this point, but it is essential for different virtual worlds to be able to talk to each other. It will be an uphill battle in terms of both permissions and technology development, because information needs to be shared between different systems and providers in a repeatable and understandable way.

1. Network Requirements

When Neal Stephenson wrote Snow Crash in 1992, consumer internet access over dial-up had only just started. As internet speeds evolved from clunky, slow dial-up to broadband and then to fiber, connectivity rose with them, continuously blurring the lines between our physical and digital lives. Let us put this in perspective. The internet was born in the '60s, when the US military was looking for a way to communicate over a connected network, and by the '80s personal computers had hit the market. In 1989, Tim Berners-Lee invented the World Wide Web. The first browsers followed in the early '90s, and by the late '90s 56k modems had hit the market, allowing surfing speeds of 56,000 bits per second. That is mind-bogglingly slow: today's 1,000 Mbps connections can download a 1 GB file in seconds, while the same download at 56,000 bits per second would take well over a day. In the 2000s, broadband took off and freed up phone lines, since the internet now came over cable lines, and web pages loaded much faster. Then came Wi-Fi, followed by Wi-Fi-enabled smartphones that let us browse, load, and upload pictures. Today fiber internet offers speeds of up to 1 gigabit per second. That means no waiting when working with big files, and smooth movie streaming even when the whole neighborhood is doing the same thing at that very moment. But is that sufficient for the metaverse?
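To put the dial-up comparison in numbers, here is the arithmetic as a small Python sketch. It assumes decimal units (1 GB = 10^9 bytes) and links running at their full nominal rate with no protocol overhead, so it is a best case for both connections.

```python
# Rough download-time arithmetic: file size in bytes vs. link speed in bits per second.
# Assumes decimal units and a link running at its full nominal rate with no
# protocol overhead, so real downloads would be somewhat slower.

def download_seconds(size_bytes: float, link_bps: float) -> float:
    """Time to move size_bytes over a link of link_bps bits per second."""
    return size_bytes * 8 / link_bps

ONE_GB = 10**9               # bytes
DIAL_UP_BPS = 56_000         # nominal 56k modem
FIBER_BPS = 1_000 * 10**6    # 1,000 Mbps fiber

if __name__ == "__main__":
    fiber = download_seconds(ONE_GB, FIBER_BPS)
    dial_up = download_seconds(ONE_GB, DIAL_UP_BPS)
    print(f"1 GB over 1,000 Mbps fiber: {fiber:.0f} s")
    print(f"1 GB over a 56k modem: {dial_up / 3600:.1f} h ({dial_up / 86400:.2f} days)")
```

The fiber download finishes in about 8 seconds, while the 56k modem needs roughly 40 hours.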

Let us look at the network requirements for the metaverse. Network performance comes down to two things: bandwidth and latency. Bandwidth is how much data can be transmitted per unit of time. Latency is the time it takes for data to travel from one point to another and back.
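A simple way to see how the two interact is a first-order model: a request takes one round trip of latency plus the time needed to push its payload through the available bandwidth. The sketch below is only that simplified model (it ignores TCP behavior, packet loss, and server processing), and the 200-byte update size is a hypothetical example; the 35 ms latency is the US city average quoted further on.

```python
# First-order model of request time: one round trip of latency plus the time
# to serialize the payload onto the link. Ignores TCP slow start, packet loss
# and server processing, so it is a lower bound rather than a prediction.

def transfer_ms(payload_bytes: int, bandwidth_mbps: float, latency_ms: float) -> float:
    """Approximate completion time in milliseconds."""
    serialization_ms = payload_bytes * 8 / (bandwidth_mbps * 1_000_000) * 1000
    return latency_ms + serialization_ms

if __name__ == "__main__":
    # Hypothetical 200-byte movement update vs. a 1 GB download,
    # both over a 1,000 Mbps link with 35 ms of latency.
    small = transfer_ms(200, 1000, 35)
    large = transfer_ms(10**9, 1000, 35)
    print(f"200-byte update: {small:.4f} ms (latency dominates)")
    print(f"1 GB download:   {large:.0f} ms (bandwidth dominates)")
```

For small, frequent updates such as avatar movements, latency is almost the entire cost; for large downloads, bandwidth is. The metaverse needs both to be good at the same time.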

The biggest networking lesson from the pandemic was that working from home delivered more than acceptable performance as long as we had cable or fiber-optic internet access. Satellite internet is a different story: with geosynchronous satellites, the current propagation delay is around ¼ of a second, because the signal has to travel about 22,300 miles up to the satellite and another 22,300 miles back down. Even at the speed of light, that takes roughly a quarter of a second. For many applications this does not matter. We don't care whether a WhatsApp message takes 100 ms or 2 seconds to arrive. Even with video calls, we have a relatively high tolerance for latency, and a couple of seconds' delay after hitting the play button on Netflix goes unnoticed. In fact, Netflix deliberately delays the start of the video stream so that your device can download ahead in case your network hiccups for a moment or two. We never notice.
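The buffering trick can be illustrated with a toy model. This is not Netflix's actual player logic, which is far more sophisticated; it simply assumes 1-second video chunks, a network that delivers a given number of chunks each second, and a player that consumes one chunk per second once playback starts.

```python
# Toy model of a streaming player's start-up buffer (illustration only).
# arrivals[t] = number of 1-second video chunks downloaded during second t.
# Playback begins once `startup_chunks` are buffered, then consumes one chunk per second.

def count_stall_seconds(arrivals: list[int], startup_chunks: int) -> int:
    """Return how many seconds playback froze after it started."""
    buffered = 0
    playing = False
    stalls = 0
    for received in arrivals:
        buffered += received
        if not playing:
            if buffered < startup_chunks:
                continue              # still filling the start-up buffer
            playing = True
        if buffered > 0:
            buffered -= 1             # play one second of video
        else:
            stalls += 1               # nothing to play: the viewer sees a freeze
    return stalls

if __name__ == "__main__":
    # The network keeps pace with playback except for a 2-second hiccup.
    network = [1, 1, 1, 0, 0, 1, 1, 1]
    print("start immediately:", count_stall_seconds(network, startup_chunks=1), "s of stalling")
    print("pre-buffer 3 s:   ", count_stall_seconds(network, startup_chunks=3), "s of stalling")
```

Starting immediately produces 2 seconds of freezing, while pre-buffering 3 seconds hides the hiccup entirely at the cost of a slightly later start. A metaverse interaction cannot hide behind a buffer like this, because what you see has to respond to what you do right now.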

However, for AAA online games (AAA is a term for video games developed by a major publisher with a huge budget for development and marketing), the latency requirements are far stricter, because latency determines how quickly a player's commands take effect. It determines whether you kill or get killed. The generally acceptable latency threshold for humans is 275 ms, but in AAA games gamers get frustrated at 50 ms and non-gamers at 110 ms. In the US, average latency is around 35 ms in cities, while global latency is around 100 to 200 ms. To avoid motion sickness in the metaverse, you need lag times below 20 ms (0.02 s).
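The 20 ms target also puts a hard physical limit on how far away the computer doing the work can be. The sketch below assumes light in optical fiber travels at roughly 200,000 km/s (about two-thirds of its speed in a vacuum) and ignores routing detours, queuing, rendering, and display time, so real limits are much tighter.

```python
# Upper bound on server distance for a given round-trip latency budget.
# Assumption: light in optical fiber covers roughly 200,000 km per second.
# Routing detours, queuing, rendering and display time are ignored.

FIBER_KM_PER_S = 200_000

def max_server_distance_km(round_trip_budget_ms: float) -> float:
    """Maximum one-way fiber distance if the whole round trip fits the budget."""
    km_travelled = FIBER_KM_PER_S * round_trip_budget_ms / 1000
    return km_travelled / 2   # the signal has to go there and come back

if __name__ == "__main__":
    for label, budget in [("metaverse motion target", 20),
                          ("AAA gamer frustration", 50),
                          ("non-gamer frustration", 110)]:
        print(f"{label:>24}: {budget:>3} ms -> at most "
              f"{max_server_distance_km(budget):,.0f} km away")
```

Even with zero processing time, a 20 ms round trip means the server can be no more than about 2,000 km of fiber away.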

The metaverse requires a high-bandwidth, low-latency environment to synchronize facial and physical movements. Consider Microsoft Flight Simulator, the most realistic and expansive consumer simulation in history. It includes 2 trillion individually rendered trees, 1.5 billion buildings, and nearly every road, mountain, city, and airport on the globe, all of which look like the ‘real thing’ because they’re based on high-quality scans of the real thing. But to pull this off, Microsoft Flight Simulator requires over 2.5 petabytes of data (2,500,000 gigabytes). There is no way for a consumer device (or most enterprise devices) to store this amount of data.
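Downloading that much data would not be practical either. The following sketch works out the transfer time for 2.5 PB at a few nominal link speeds, assuming decimal units and a link running flat out; the 100 and 200 Mbps figures echo the satellite downstream rates mentioned in the next paragraph.

```python
# Time to download a 2.5 PB world at various nominal link speeds.
# Assumes decimal units (1 PB = 10**15 bytes) and no protocol overhead.

PETABYTE = 10**15  # bytes

def days_to_download(size_bytes: float, link_mbps: float) -> float:
    """Days needed to transfer size_bytes over a link_mbps link."""
    seconds = size_bytes * 8 / (link_mbps * 10**6)
    return seconds / 86_400

if __name__ == "__main__":
    world = 2.5 * PETABYTE
    for mbps in (100, 200, 1_000):
        print(f"{mbps:>5} Mbps: {days_to_download(world, mbps):,.0f} days")
```

Even at 1,000 Mbps this comes to roughly 231 days, which is why a world of this size has to be streamed in pieces, on demand, rather than stored on the device.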

Many players struggle with network congestion today, and the metaverse will only intensify those needs. The good news is that many companies, including SpaceX with its Starlink satellites, are working diligently to solve this issue. As I wrote above, the current latency from GEOS (geosynchronous satellites) is around ¼ of a second. One solution put forth by experts is to place satellites in a much lower orbit, as low earth orbit satellites (LEOS). SpaceX's Starlink and Amazon's Project Kuiper promise latency of around 20 ms with downstream rates of 100 to 200 Mbps. The big question is: how soon?
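Basic physics shows why moving satellites closer helps. The sketch computes the straight-up, straight-down propagation delay for a geostationary satellite (about 35,786 km, the 22,300 miles mentioned above) and for a low earth orbit satellite at an assumed altitude of about 550 km, roughly where Starlink operates. It uses the speed of light in a vacuum and ignores processing, routing, and ground-network delays.

```python
# Propagation delay for a signal going up to a satellite and straight back down.
# Assumptions: GEO altitude ~35,786 km, LEO altitude ~550 km, vacuum speed of light,
# no processing, routing or ground-network delay.

SPEED_OF_LIGHT_KM_S = 299_792.458

def bent_pipe_delay_ms(altitude_km: float) -> float:
    """Delay for one hop up to the satellite and back down, in milliseconds."""
    return 2 * altitude_km / SPEED_OF_LIGHT_KM_S * 1000

if __name__ == "__main__":
    for name, altitude in [("GEO", 35_786), ("LEO", 550)]:
        hop = bent_pipe_delay_ms(altitude)
        print(f"{name}: {hop:6.1f} ms user-to-ground-station, {2 * hop:6.1f} ms round trip")
```

GEO comes out at roughly 239 ms for a single up-and-down hop, while LEO is under 4 ms. The rest of the roughly 20 ms promised for LEO service would come from routing, ground stations, and processing, which this sketch leaves out.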

2. Hardware Requirements

One of the biggest challenges in building a metaverse is simulating the rules of physics, such as gravitation, particle physics, electromagnetism, light, and sound waves. Creating such an environment could have the greatest computational requirements in human history, and compute will also be the bottleneck for what can be achieved in the metaverse. Today, when Fortnite brings gamers together for a meetup or a concert, it reduces the number of participants to 50 and limits the actions they can perform.

“It makes me wonder where the future evolutions of these types of games will go that we can’t possibly build today. Our peak is 10.7 million players in Fortnite — but that’s 100,000 hundred-player sessions. Can we eventually put them all together in this shared world? And what would that experience look like? There are whole new genres that cannot even be invented yet because of the ever upward trend of technology.”
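One way to see why session sizes get capped is to count how many pairs of players might need to be kept in sync. This is a deliberately naive model that assumes every participant may interact with every other; it is not how Fortnite or any real engine actually works, but it shows the quadratic growth that makes one shared world so hard. The sketch also checks the quote's arithmetic: 10.7 million players in 100-player sessions works out to 107,000 sessions.

```python
import math

# Naive illustration: if every participant may need to be kept in sync with every
# other participant, the number of pairs grows quadratically with session size.

def interacting_pairs(players: int) -> int:
    """Number of distinct player pairs in one shared session."""
    return players * (players - 1) // 2

def sessions_needed(total_players: int, session_size: int) -> int:
    """How many sessions of a given size are needed to seat everyone."""
    return math.ceil(total_players / session_size)

if __name__ == "__main__":
    for n in (50, 100, 10_700_000):
        print(f"{n:>10,} players -> {interacting_pairs(n):,} pairs")
    print(f"10.7M players in 100-player sessions: "
          f"{sessions_needed(10_700_000, 100):,} sessions")
```

Going from 100 players (4,950 pairs) to all 10.7 million in one world (about 5.7 × 10^13 pairs) multiplies the pairs by more than ten billion.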

Today less than 1% of PCs and Macs can even run Microsoft Flight Simulator, and it only reached Microsoft's own Xbox Series X and S consoles in 2021. One solution experts see is to concentrate all computation in the cloud. Google's Stadia and Amazon's Luna, for example, process all gameplay in the cloud and push the experience to the user as a video stream. The only requirement for a consumer device is to play this video and send input (press X to move left). In theory, this means anything from a $2,000 Alienware PC to a $1,500 iPhone to a fridge with a video screen could play Cyberpunk 2077. This is a compelling idea, but it adds latency, since every input has to travel to the data center and every rendered frame has to travel back, and that could be a blocker for the metaverse.
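The latency cost of cloud rendering can be sketched as a motion-to-photon budget: the time from a user's movement to the updated image on screen. All stage timings below are hypothetical placeholders chosen for illustration, not measurements; only the 35 ms round trip echoes the US city average quoted earlier, and the 20 ms budget is the motion-sickness threshold from the network section.

```python
# Motion-to-photon budget: local rendering vs. cloud rendering.
# Stage timings are HYPOTHETICAL placeholders for illustration, not measurements.

BUDGET_MS = 20  # motion-sickness threshold discussed earlier

local_pipeline = {"tracking/input": 2, "render": 8, "display": 5}
cloud_pipeline = {
    **local_pipeline,           # same work, plus the cost of going remote
    "video encode": 3,
    "network round trip": 35,   # US city average quoted earlier
    "video decode": 3,
}

def total_ms(stages: dict[str, float]) -> float:
    """Sum of all stage latencies in milliseconds."""
    return sum(stages.values())

if __name__ == "__main__":
    for name, stages in [("local", local_pipeline), ("cloud", cloud_pipeline)]:
        t = total_ms(stages)
        verdict = "within" if t <= BUDGET_MS else "over"
        print(f"{name}: {t} ms ({verdict} the {BUDGET_MS} ms budget)")
```

Under these assumptions local rendering fits in 15 ms, while the cloud pipeline lands at 56 ms, because the network round trip alone exceeds the whole budget.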

Another suggestion from experts is local computing, where our personal and mobile devices do the work. The hope is that, despite the intensive computational requirements of AR, these devices will be able to do a good enough job. That may sound promising, but no one has yet figured out how to split rendering power across multiple users effectively, cost-effectively, and at the frame rate and resolution the metaverse demands. This is where Web 3.0 (the decentralization of the web, which I will write more about in the future) could play a major role. In that model, blockchains would generally be the way to run programs, store data, and verify transactions. Proponents even claim it could be a billion times faster than the computers on our desktops, because it combines everyone's computers.

Another big challenge will be on the consumer side: delivering seamless virtual and augmented reality environments. We will also need powerful tracking cameras, sensor hardware, haptic gloves, and more. Our current technology stack limits what can be achieved, but we have already come a long way. The new iPhone tracks 30,000 points on your face via infrared sensors, and Apple's Object Capture creates high-fidelity virtual objects from photos in a matter of minutes.

Apple introduces 'Object Capture' tool to turn photos into 3D models

A lot of experimentation is happening with AR/VR devices to improve the adaptability and usability of Oculus, HoloLens, and Google Glass. We humans can see across an average of 210 degrees, yet Microsoft's HoloLens 2 display covers only 52 degrees (up from 34 degrees on the original HoloLens), and Snap's upcoming glasses cover only 26.3 degrees. Another example is Google's Project Starline, a hardware booth for video conversations that makes you feel like you are in the same room as the other person. It's pretty insane.

Project Starline: Feel like you're there, together
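To put the field-of-view numbers side by side, the sketch divides each display's figure by the 210-degree human figure. One caveat: headset field of view is usually quoted diagonally (as it is for HoloLens 2), while the human figure is horizontal, so these percentages are only a rough comparison.

```python
# Share of human field of view covered by each display, using the figures above.
# Rough comparison only: headset figures are typically diagonal, the human one horizontal.

HUMAN_FOV_DEG = 210

displays = {"HoloLens (1st gen)": 34, "HoloLens 2": 52, "Snap glasses": 26.3}

def coverage(display_deg: float, human_deg: float = HUMAN_FOV_DEG) -> float:
    """Fraction of the human field of view covered by a display."""
    return display_deg / human_deg

if __name__ == "__main__":
    for name, deg in displays.items():
        print(f"{name:<20} {deg:>5} deg  ~{coverage(deg):.0%} of human FOV")
```

HoloLens 2 comes out at roughly a quarter of human vision and Snap's glasses at about an eighth.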

3. Interoperability

The ability for users to access their identity, records, and assets across different metaverses will be really important for adoption. As a gamer, you accumulate awards, a global ranking, and other assets in one game, but you start at zero when you enter a new game. In the metaverse, users’ achievements, badges, NFTs, and avatars could be carried into a new world, just as you can buy a Steelers jersey and wear it to a game, a bar party, or Disneyland. The parallels between the virtual worlds we experience in gaming today and the web more generally are striking: both are centralized, closed, proprietary, and extractive, with shareholder supremacy over user-centricity.
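Here is a hypothetical sketch of what carrying achievements and assets between worlds could look like as a data structure: the profile lives in a record the user controls, and each world decides which of those assets it can accept. The class names, fields, and asset-ID prefixes are invented for illustration and do not come from any existing standard or platform.

```python
from dataclasses import dataclass, field

# HYPOTHETICAL portable profile: achievements and assets live in a user-controlled
# record, and each world reads from it instead of starting the player at zero.

@dataclass
class PortableProfile:
    user_id: str
    badges: set[str] = field(default_factory=set)
    assets: dict[str, str] = field(default_factory=dict)  # asset id -> description

@dataclass
class World:
    name: str
    accepted_asset_prefixes: tuple[str, ...]  # asset kinds this world can render

    def enter(self, profile: PortableProfile) -> list[str]:
        """Return the assets this world lets the user bring in."""
        return sorted(asset_id for asset_id in profile.assets
                      if asset_id.startswith(self.accepted_asset_prefixes))

if __name__ == "__main__":
    me = PortableProfile("player-1", {"season-1-champion"},
                         {"wearable:steelers-jersey": "Jersey",
                          "avatar:robot": "Robot avatar",
                          "vehicle:kart": "Kart"})
    stadium = World("Stadium", ("wearable:", "avatar:"))
    racing = World("Racing", ("avatar:", "vehicle:"))
    print(stadium.name, stadium.enter(me))
    print(racing.name, racing.enter(me))
```

The jersey shows up in the stadium but not in the racing world, which mirrors the permission and technology problem: every world still has to agree on what an asset is and how to render it.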

The interoperability part of the metaverse is not widely discussed at this point, but it could have serious impacts on equal opportunity, on in-world economies, and on each metaverse world's local community. Implementing interoperability could also be challenging in terms of permissions and technology. Axie Infinity has now proven beyond reasonable doubt what many suspected: players will spend more money in a game when that value is freely transferable off-platform and value earned or bought is easily convertible into cryptocurrencies like Bitcoin or Ethereum. That is what helped Axie achieve a record $1.2 billion in sales, growing exponentially from just over 108,000 daily active users (DAUs) in June to more than a million DAUs today.

Transferring assets and NFTs is still a big challenge, even on decentralized platforms. Matt Ball suggests an additional layer of services, such as wallets or storage lockers, that would remove the need to connect every platform directly to every other. Platforms like Discord could play a major role in such a transformation, as could secondary marketplaces. Discord has grown to over 250M users and gamers and has solved the problem of limited communication: as a social communication tool, it interconnects different game franchises in an open environment. Secondary marketplaces like OpenSea also integrate with multiple decentralized worlds to support the transfer of assets as externally owned items. Users can't yet import NFTs from one world into another, but these marketplaces might eventually be used to do exactly that. Another important step in this direction is the Metakey project, which offers multipurpose NFTs that can be used across multiple networks. The Metakey is ONE token that can be used across multiple platforms to transform into avatars, game items, exclusive game perks, course material, virtual land access, discounts, and much more!
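The benefit of a shared layer like a wallet or storage locker can be seen by counting integrations. The sketch assumes that without such a layer every platform must integrate directly with every other, while with it each platform integrates once; it counts connections only and says nothing about how hard each one is to build.

```python
# Point-to-point vs. shared-layer integration counts.
# Direct: every platform pairs with every other platform (quadratic growth).
# Shared layer: each platform connects once to a common wallet/locker (linear growth).

def direct_integrations(platforms: int) -> int:
    """Integrations needed if every pair of platforms connects directly."""
    return platforms * (platforms - 1) // 2

def hub_integrations(platforms: int) -> int:
    """Integrations needed if every platform connects to one shared layer."""
    return platforms

if __name__ == "__main__":
    for n in (5, 20, 100):
        print(f"{n:>3} platforms: {direct_integrations(n):>5} direct "
              f"vs {hub_integrations(n):>3} via a shared layer")
```

With 100 worlds, direct connections would need 4,950 integrations, while a shared layer needs only 100.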

How does Metakey work?

Step 1: Buy a Metakey from either OpenSea or Rarible.

Step 2: Join the Metakey Discord and verify your role.

Step 3: Follow Metakey on Twitter or Discord to be alerted about new drops, discounts, integrations, events, and more!

With interoperability also comes the question of how many metaverses there will be. From the way things look at this point, there will be at least two: one dominated by closed platforms and big tech companies like Facebook, and another built on open protocols leveraging blockchains, such as the decentralized virtual-land platforms Decentraland and The Sandbox. The distinction between open and closed isn't just about technology choices and the extent to which platforms embrace open-source principles; more importantly, it is about whether they run a closed economy, within or across their proprietary games, or allow value to be transferred outside their ecosystem. Part of Fortnite's success came from pushing back against traditional anti-competitive practices by being compatible across devices and operating systems. Rather than locking users into one console version, Fortnite can be played on virtually any platform today. However, Epic has said it will explore a more permissioned, curated approach to NFTs, similar to an app store.

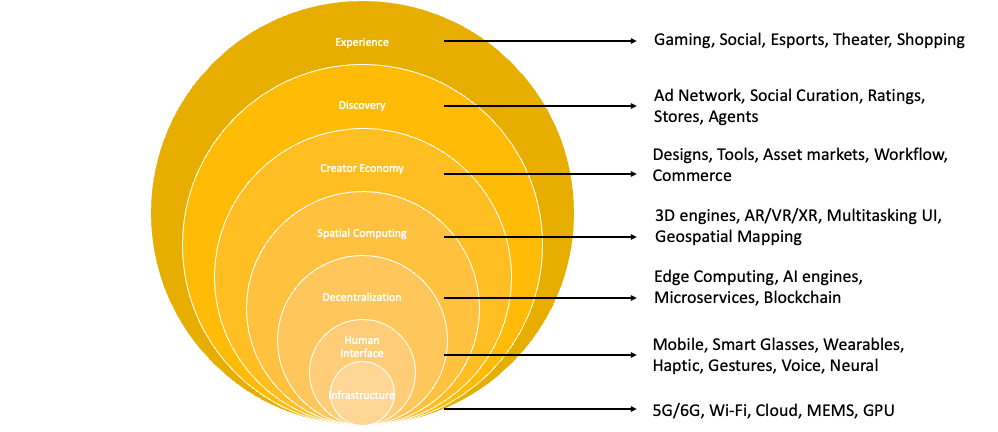

This is what we need architecturally to build a metaverse. Other layers are required as well (Venn of Metaverse):

Experience is what we actually engage with: games, social experiences, live music, etc.

Discovery is how people learn that an experience exists.

The Creator Economy is everything that helps creators make and monetize things for the metaverse: design tools, animation systems, graphics tools, monetization technologies, etc. Read The Evolution of the Creator Economy for more insights on how these markets evolve.

Spatial Computing refers to the software that brings objects into 3D, brings computing into objects in the world, and allows us to interact with them. It includes 3D engines, gesture recognition, spatial mapping, and the AI that supports them.

Decentralization is everything that is moving more of the ecosystem to a permissionless, distributed and more democratized structure.

Human Interface refers to the hardware that helps us access the metaverse — everything from mobile devices to VR headsets to future technologies like advanced haptics and smartglasses.

Infrastructure is the semiconductors, material science, cloud computing and telecommunications networks that make it possible to construct any of the higher layers.

Beyond this, there is another technical and philosophical distinction between versions, which Jamie Burke calls lo-fi and hi-fi. Burke, of Outlier Ventures, describes lo-fi as projects built for the lowest-spec devices and bandwidth in the name of universal accessibility, like Cryptovoxels. Other projects, such as Decentraland and Second Life, straddle the line between hi-fi and lo-fi. He then maps projects on two axes, with open versus closed systems on the x-axis and lo-fi versus hi-fi on the other.

According to Burke, with time, an open metaverse built on shared open-source protocols, open infrastructure, and a single unifying (yet open) financial system will erode, or ‘eat,’ and potentially eventually replace closed platforms due to powerful network effects.

The full version of the metaverse is decades away. It will require extraordinary technological advancements to produce shared, persistent simulations that synchronize millions of users in real time. It will also require a lot of regulatory involvement and changes in business policies. Most importantly, it will require a change in consumer behavior. However, judging by the current atmosphere, it looks like we are well on our way. Fortnite and Roblox are creating people's avatars and taking them on journeys through virtual space. Facebook is investing heavily in Oculus, and Microsoft wants to make HoloLens (currently sold to the military for $2999 apiece) a common man's entertainment box. Let us concentrate for a moment on Fortnite and Roblox. These "games" bring together many different technologies and produce an experience that is tangible and feels different from everything that came before and exists today. But do they really constitute the metaverse?

Cheers